-

Type: Bug

-

Resolution: Unresolved

-

Priority: Low

-

None

-

Affects Version/s: 10.3.13

-

Component/s: JQL Search

-

None

-

10.03

-

Severity 1 - Critical

Issue Summary

The membersOf() JQL function allows users to match a user field against the members of a particular group.

Searching against a group with a very large number of users (6.2 million in our example) causes very high memory pressure (~4.1 GB of heap usage in the same example).

The impact is more severe if the user retries the search on the assumption that the original request has stalled and will not complete. In reality, the application thread is still working through the very expensive request. Each subsequent attempt creates a new thread to do the same work, resulting in elevated load and further heap consumption.

Ideally, this is resolved either by optimizing the function or by implementing a limit/threshold (possibly configurable by admins) that prevents the search from running when the target group contains more users than that limit.
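To illustrate the limit/threshold idea, here is a minimal sketch in Java. It is not Jira code: the class `MembersOfGuard`, its methods, and the member-count lookup are all hypothetical names introduced for illustration, and the sketch assumes the group size can be obtained cheaply (for example with a count query) without loading the members themselves.

```java
import java.util.function.ToLongFunction;

/**
 * Hypothetical guard that refuses to expand a group whose size exceeds
 * an admin-configured limit. None of these names are part of Jira's API.
 */
final class MembersOfGuard {
    private final long maxGroupSize;
    // Assumption: returns the member count without materializing the members.
    private final ToLongFunction<String> memberCounter;

    MembersOfGuard(long maxGroupSize, ToLongFunction<String> memberCounter) {
        if (maxGroupSize < 0) {
            throw new IllegalArgumentException("maxGroupSize must be >= 0");
        }
        this.maxGroupSize = maxGroupSize;
        this.memberCounter = memberCounter;
    }

    /** Returns true when the group is small enough to expand. */
    boolean isAllowed(String groupName) {
        return memberCounter.applyAsLong(groupName) <= maxGroupSize;
    }

    /** Throws before any expensive work starts if the group is too large. */
    void check(String groupName) {
        long size = memberCounter.applyAsLong(groupName);
        if (size > maxGroupSize) {
            throw new IllegalStateException(
                "membersOf(\"" + groupName + "\") rejected: group has " + size
                    + " members, limit is " + maxGroupSize);
        }
    }
}
```

Calling `check` before the function expands the group would let the search fail fast with a clear message instead of tying up a thread and heap. A suitable default for the limit is an open question.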

Steps to Reproduce

- Use a Jira instance in which a single group contains a very large number of users. Our example is ~6.2 million users in "jira-users".

- Perform any JQL query containing membersOf(). An example:

- assignee in membersOf("jira-users")

- Aggravate the issue by clicking the Search button or reloading the page multiple times.
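The steps above can also be approximated from a script instead of the browser. The following is a rough sketch only, intended for a disposable test instance. It assumes the instance exposes the `/rest/api/2/search` REST endpoint with a `jql` query parameter; the base URL is a placeholder, authentication is omitted, and the class and method names are hypothetical.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;

/** Hypothetical reproduction helper; requires Java 11 or later. */
final class MembersOfRepro {

    /** Builds the search URL with the JQL form-encoded into the query string. */
    static URI buildSearchUri(String baseUrl, String jql) {
        String encoded = URLEncoder.encode(jql, StandardCharsets.UTF_8);
        return URI.create(baseUrl + "/rest/api/2/search?jql=" + encoded);
    }

    /**
     * Sends the same search several times without waiting for earlier
     * attempts, mimicking a user who keeps clicking Search.
     * Authentication headers are omitted and would be needed in practice.
     */
    static List<CompletableFuture<HttpResponse<String>>> fireConcurrently(
            HttpClient client, URI uri, int attempts) {
        HttpRequest request = HttpRequest.newBuilder(uri)
                .header("Accept", "application/json")
                .GET()
                .build();
        List<CompletableFuture<HttpResponse<String>>> pending = new ArrayList<>();
        for (int i = 0; i < attempts; i++) {
            pending.add(client.sendAsync(request, HttpResponse.BodyHandlers.ofString()));
        }
        return pending;
    }
}
```

For example, `fireConcurrently(HttpClient.newHttpClient(), buildSearchUri("https://jira.example.com", "assignee in membersOf(\"jira-users\")"), 5)` would issue five overlapping searches against the placeholder host.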

Expected Results

The application is not impacted any more than it would be by any other reasonable/typical JQL query.

Actual Results

CPU load may increase due to the request being expensive. It may also increase due to the resulting Garbage Collection activity.

Application performance will degrade depending on CPU Load and Garbage Collection pauses.

An OutOfMemoryError will eventually occur. In our case, it occurred more than 5 minutes after the reproduction steps were performed.

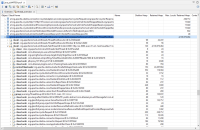

Heap dump analysis reveals that heap usage is mainly in thread locals (and not necessarily in a single object containing the full list of users in the group).

Workaround

None

Increasing the heap allocation may provide some buffer before the issue occurs, but the tradeoff in increased resource allocation and degraded GC performance may not be justified, given how easily the issue can be reproduced again with just a few more parallel attempts.