Details

-

Bug

-

Resolution: Obsolete

-

Medium

-

None

-

3.0.1

-

None

Description

If you have imported a lot of content into Confluence (for example, via the MediaWiki importer), a large number of items will be queued for indexing, which can result in:

Exception in thread "DefaultQuartzScheduler_Worker-5" java.lang.OutOfMemoryError: Java heap space
    at com.mysql.jdbc.TimeUtil.fastTimestampCreate(TimeUtil.java:1134)
    at com.mysql.jdbc.ResultSetImpl.fastTimestampCreate(ResultSetImpl.java:1030)
    at com.mysql.jdbc.ResultSetRow.getTimestampFast(ResultSetRow.java:1310)
    at com.mysql.jdbc.ByteArrayRow.getTimestampFast(ByteArrayRow.java:124)
    at com.mysql.jdbc.ResultSetImpl.getTimestampInternal(ResultSetImpl.java:6617)
    at com.mysql.jdbc.ResultSetImpl.getTimestamp(ResultSetImpl.java:5943)
    at com.mysql.jdbc.ResultSetImpl.getTimestamp(ResultSetImpl.java:5981)
    at com.mchange.v2.c3p0.impl.NewProxyResultSet.getTimestamp(NewProxyResultSet.java:3394)
    at net.sf.hibernate.type.TimestampType.get(TimestampType.java:27)
    at net.sf.hibernate.type.NullableType.nullSafeGet(NullableType.java:62)
    at net.sf.hibernate.type.NullableType.nullSafeGet(NullableType.java:53)
    at net.sf.hibernate.type.AbstractType.hydrate(AbstractType.java:67)
    at net.sf.hibernate.loader.Loader.hydrate(Loader.java:690)
    at net.sf.hibernate.loader.Loader.loadFromResultSet(Loader.java:631)
    at net.sf.hibernate.loader.Loader.instanceNotYetLoaded(Loader.java:590)
    at net.sf.hibernate.loader.Loader.getRow(Loader.java:505)
    at net.sf.hibernate.loader.Loader.getRowFromResultSet(Loader.java:218)
    at net.sf.hibernate.loader.Loader.doQuery(Loader.java:285)
    at net.sf.hibernate.loader.Loader.doQueryAndInitializeNonLazyCollections(Loader.java:138)
    at net.sf.hibernate.loader.Loader.doList(Loader.java:1063)
    at net.sf.hibernate.loader.Loader.list(Loader.java:1054)
    at net.sf.hibernate.hql.QueryTranslator.list(QueryTranslator.java:854)
    at net.sf.hibernate.impl.SessionImpl.find(SessionImpl.java:1554)
    at net.sf.hibernate.impl.QueryImpl.list(QueryImpl.java:49)
    at org.springframework.orm.hibernate.HibernateTemplate$20.doInHibernate(HibernateTemplate.java:731)
    at org.springframework.orm.hibernate.HibernateTemplate.execute(HibernateTemplate.java:370)
    at org.springframework.orm.hibernate.HibernateTemplate.find(HibernateTemplate.java:717)
    at org.springframework.orm.hibernate.HibernateTemplate.find(HibernateTemplate.java:700)
    at bucket.search.persistence.dao.hibernate.HibernateIndexQueueEntryDao.getNewEntries(HibernateIndexQueueEntryDao.java:43)
    at com.atlassian.confluence.search.lucene.queue.DatabaseIndexTaskQueue.getUnflushedEntries(DatabaseIndexTaskQueue.java:161)
    at com.atlassian.confluence.search.lucene.queue.DatabaseIndexTaskQueue.flushQueue(DatabaseIndexTaskQueue.java:144)
    at com.atlassian.confluence.search.lucene.DefaultConfluenceIndexManager.flushQueue(DefaultConfluenceIndexManager.java:93)

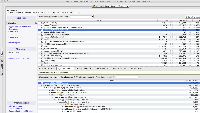

Looking at the heap dump (screenshot attached), most of the memory is taken up by the result set returned from the database.

This is because bucket.search.persistence.dao.hibernate.HibernateIndexQueueEntryDao.getNewEntries requests all new index queue entries in a single query:

select * from INDEXQUEUEENTRIES where CREATIONDATE > ? order by CREATIONDATE, ENTRYID;

This result set can be huge for customers who have imported a large amount of content.

We should limit the number of rows requested per query, then loop until all entries that need to be indexed have been fetched.
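
A minimal sketch of what such a loop might look like, not the actual Confluence code. The names `PagedIndexQueueFlush`, `Entry`, `PageFetcher`, `flushInPages` and `inMemory` are all hypothetical and chosen for illustration. It assumes the DAO can return at most `limit` rows that sort strictly after a given (CREATIONDATE, ENTRYID) pair (with Hibernate this would likely mean capping the query with `Query.setMaxResults`), and it uses an in-memory list in place of the database so the example is self-contained.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.function.Consumer;

public class PagedIndexQueueFlush {

    /** Hypothetical stand-in for one row of INDEXQUEUEENTRIES. */
    public static final class Entry {
        public final long creationDate; // epoch millis, stands in for CREATIONDATE
        public final long entryId;      // stands in for ENTRYID

        public Entry(long creationDate, long entryId) {
            this.creationDate = creationDate;
            this.entryId = entryId;
        }
    }

    /**
     * Hypothetical DAO operation: return at most 'limit' entries that sort
     * strictly after (afterCreationDate, afterEntryId), ordered by
     * CREATIONDATE, ENTRYID.
     */
    public interface PageFetcher {
        List<Entry> fetchPage(long afterCreationDate, long afterEntryId, int limit);
    }

    /**
     * Fetches entries created after 'since' one page at a time and hands each
     * to 'indexer', so only one page is held in memory at once.
     * Returns the total number of entries processed.
     */
    public static int flushInPages(PageFetcher fetcher, long since, int pageSize,
                                   Consumer<Entry> indexer) {
        if (pageSize <= 0) {
            throw new IllegalArgumentException("pageSize must be positive");
        }
        long lastDate = since;
        // Long.MAX_VALUE means no entry with creationDate == since can match,
        // which mirrors the original "CREATIONDATE > ?" condition.
        long lastId = Long.MAX_VALUE;
        int total = 0;
        while (true) {
            List<Entry> page = fetcher.fetchPage(lastDate, lastId, pageSize);
            for (Entry e : page) {
                indexer.accept(e);
            }
            total += page.size();
            if (page.size() < pageSize) {
                return total; // a short (or empty) page means nothing is left
            }
            Entry last = page.get(page.size() - 1);
            lastDate = last.creationDate;
            lastId = last.entryId;
        }
    }

    /** In-memory PageFetcher used only to make the sketch runnable. */
    public static PageFetcher inMemory(List<Entry> rows) {
        List<Entry> sorted = new ArrayList<>(rows);
        sorted.sort(Comparator.comparingLong((Entry e) -> e.creationDate)
                .thenComparingLong(e -> e.entryId));
        return (afterDate, afterId, limit) -> {
            List<Entry> page = new ArrayList<>();
            for (Entry e : sorted) {
                boolean after = e.creationDate > afterDate
                        || (e.creationDate == afterDate && e.entryId > afterId);
                if (after && page.size() < limit) {
                    page.add(e);
                }
            }
            return page;
        };
    }
}
```

Continuing from the last (CREATIONDATE, ENTRYID) pair seen, rather than from an offset, keeps the loop correct when several entries share the same creation date, and it matches the ordering the existing query already uses. The page size is a tuning choice; the point is only that memory use is bounded by one page instead of the whole queue.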